The Daily AI Show: Issue #91

Brown bear, brown bear, what do you see?

Welcome to Issue #91

Coming Up:

Benchmark Wins Matter Less Than Workflow Wins

The Economics of AI Are Changing Fast

The Quiet Rise of Edge AI

Plus, we discuss brown bears, whether AI is helping when it reduces anger, the threat of AI making war decisions, and all the news we found interesting this week.

It’s Sunday.

If you are in the US, you probably just lost an hour of sleep to Daylight Saving Time.

BUT, on the bright side, it will still be plenty light tonight... when you are exhausted.

Maybe between now and then, you can do a little lite reading.

Oh hey, we have just the thing.

Enjoy!

The DAS Crew

Our Top AI Topics This Week

Benchmark Wins Matter Less Than Workflow Wins

GPT-5.4 landed with strong benchmark numbers, especially on OpenAI’s GDPval test for professional work. OpenAI says GPT-5.4 reaches 83.0% on GDPval, up from 70.9% for GPT-5.2, and also improves on several coding, computer use, and tool use evaluations.

That kind of jump matters because GDPval tries to measure real office work instead of puzzle solving. The benchmark spans 44 occupations and focuses on tasks closer to finance, legal work, operations, and other white-collar workflows. Ethan Mollick highlighted the same point when he described GDPval as a meaningful measure of professional task performance and noted that GPT-5.4 now ties or beats humans on a large share of those tasks.

But the more important takeaway sits one level below the leaderboard.

The latest model releases show how fragmented “best model” has become. GPT-5.4 leads or stays near the top on some professional and computer use tasks. Gemini 3.1 Pro Preview still leads key knowledge and hallucination benchmarks in Artificial Analysis, including AA-Omniscience, and continues to score extremely well on broad reasoning tests. Artificial Analysis also reported that Gemini 3.1 Pro Preview leads 6 of the 10 evaluations in its Intelligence Index.

That creates a practical shift for teams using AI every day.

You no longer win by picking one model and declaring the matter settled. You win by matching the model to the job. A team doing spreadsheet modeling, coding, contract review, multimodal analysis, or long-context research may reach different conclusions about which model performs best because those tasks stress different strengths. Benchmarks help narrow the field. They do not replace hands-on testing.

This is why the next phase of AI adoption looks more operational than promotional.

Teams need clear test cases from their own work. They need side-by-side comparisons on the workflows that drive revenue, save time, or reduce risk. They need to track quality, speed, reliability, and cost across those workflows. A benchmark chart can point you in the right direction. It cannot tell you whether a model handles your CRM exports, your legal redlines, your financial tabs, or your internal research format better than the alternatives.
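For teams that want to try this, here is a minimal sketch of a side-by-side evaluation loop in Python. Everything in it is hypothetical: `model_a` and `model_b` are stub functions standing in for real model calls, the two test cases are placeholders for tasks from your own work, and the flat per-call costs are made-up numbers. It only shows the shape of tracking quality, speed, reliability, and cost per model.

```python
import time
from dataclasses import dataclass
from typing import Callable


@dataclass
class TestCase:
    name: str
    prompt: str
    check: Callable[[str], bool]  # returns True if the output is acceptable


@dataclass
class Candidate:
    name: str
    run: Callable[[str], str]  # stand-in for a real model call (assumption)
    cost_per_call_usd: float   # assumed flat cost, for illustration only


def evaluate(candidates, cases):
    """Run every case against every candidate and summarize the results."""
    summary = {}
    for cand in candidates:
        passed, errors, elapsed = 0, 0, 0.0
        for case in cases:
            start = time.perf_counter()
            try:
                if case.check(cand.run(case.prompt)):
                    passed += 1
            except Exception:
                errors += 1  # a crash or timeout counts against reliability
            elapsed += time.perf_counter() - start
        summary[cand.name] = {
            "pass_rate": passed / len(cases),
            "avg_seconds": elapsed / len(cases),
            "errors": errors,
            "total_cost_usd": cand.cost_per_call_usd * len(cases),
        }
    return summary


# Hypothetical stubs; replace these with calls to the models you are comparing.
def model_a(prompt):
    return prompt.upper()


def model_b(prompt):
    if "contract" in prompt:
        raise RuntimeError("simulated timeout")
    return prompt.upper()


cases = [
    TestCase("crm_export", "summarize crm export", lambda out: "CRM" in out),
    TestCase("redline", "review contract redline", lambda out: "CONTRACT" in out),
]
candidates = [
    Candidate("model_a", model_a, 0.02),
    Candidate("model_b", model_b, 0.01),
]

report = evaluate(candidates, cases)
for name, stats in report.items():
    print(name, stats)
```

In this toy run the cheaper stub fails one of the two cases, which is the kind of trade-off a leaderboard will not show you. Real checks would be richer than a substring match, but the habit is the same: same cases, every model, every release.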

That shift also changes how model vendors compete.

Labs still want leaderboard wins because those headlines travel fast. Buyers are moving toward a different question. Which model performs best inside my process, with my data, under my constraints, at my budget?

That is a better question.

And it is harder to market around.

The companies that build strong evaluation habits will make better AI decisions than the companies that chase every new release cycle. In this market, disciplined testing creates more leverage than model loyalty.

The Economics of AI Are Changing Fast

For most of the past two years, AI adoption followed a simple pattern.

People paid about twenty dollars a month for access.

ChatGPT Plus set the standard in 2023. Most AI products copied the same structure. A low monthly fee unlocked stronger models, faster responses, and higher limits. That price made AI feel like another SaaS tool. Cheap enough to test. Cheap enough for individuals.

That pricing model is now shifting.

Advanced AI tools increasingly sit in the one hundred to two hundred dollar range. Anthropic offers a $100 Claude Max tier. OpenAI offers a $200 Pro plan for heavy model usage. Several coding agents charge similar prices once usage reaches serious levels.

The jump in price looks dramatic, but the value calculation is shifting at the same time.

More people now measure AI subscriptions against time saved.

A developer who spends three hours debugging code may cut that work to twenty minutes with a coding agent. A sales team researching prospects may replace manual research with automated workflows. A founder building a prototype may move from concept to working product in an afternoon.

When AI removes hours of work, the pricing comparison changes.

One hundred dollars per month equals roughly three dollars per day.

For someone whose time carries professional value, the cost becomes trivial if the tool saves even a few minutes each day. A single automated workflow or coding task often covers the entire monthly fee.
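To make that math concrete, here is a small Python sketch of the break-even calculation. The $50 hourly value and the 30-day month are assumptions picked for illustration, not figures from any survey, so swap in your own numbers.

```python
def daily_cost(monthly_fee_usd, days_per_month=30):
    """Spread a monthly subscription fee evenly across the days of a month."""
    return monthly_fee_usd / days_per_month


def break_even_minutes_per_day(monthly_fee_usd, hourly_value_usd, days_per_month=30):
    """Minutes the tool must save each day for the time saved to equal the fee."""
    return daily_cost(monthly_fee_usd, days_per_month) / hourly_value_usd * 60


# Assumed value of one hour of the user's time (illustrative only).
HOURLY_VALUE_USD = 50

for fee in (20, 100, 200):
    minutes = break_even_minutes_per_day(fee, HOURLY_VALUE_USD)
    print(f"${fee}/month is ${daily_cost(fee):.2f}/day; "
          f"break-even at {minutes:.1f} minutes saved per day")
```

Under those assumptions, the one hundred dollar tier pays for itself at about four minutes saved per day. Counting only working days raises the daily cost, and the break-even time rises with it.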

That shift explains why higher tiers continue to gain adoption.

AI tools now behave less like software subscriptions and more like digital labor. Users do not evaluate them like note-taking apps or project management tools. They evaluate them like assistants.

Enterprise data supports the trend.

Several developer surveys in early 2026 show engineers increasing their use of AI coding tools despite rising subscription costs. Internal reports from major AI companies show power users moving quickly toward higher tiers once they begin building real workflows with agents or automation. Once someone integrates AI into daily work, lower tiers often become restrictive.

Usage limits appear faster.

Tasks require longer reasoning runs.

Large projects burn through tokens.

This pattern mirrors the evolution of cloud computing.

Early cloud services competed on low-cost storage and compute. As companies began running critical workloads in the cloud, pricing shifted toward performance and scale rather than cheap access. AI tools now follow the same trajectory. The next generation of users will not ask whether AI costs twenty dollars. They will ask how much time the system gives back each week.

That question turns a subscription into infrastructure.

And infrastructure rarely stays cheap.

The Quiet Rise of Edge AI

A major shift in AI development is happening quietly.

For most of the last two years, advanced AI required massive cloud infrastructure. Companies relied on large frontier models running in enormous data centers. If you wanted strong reasoning or agent behavior, you needed access to expensive compute.

That assumption is starting to break.

New open models continue shrinking while gaining capabilities that previously required much larger systems. One recent example involves the latest releases in the Qwen model family, where researchers pushed advanced reasoning and agent-style behavior into models small enough to run locally on personal hardware.

That shift matters more than most people realize.

Large models will continue to dominate frontier performance. But smaller models introduce a different advantage. They run directly on phones, laptops, and local machines without sending data to a remote server. That opens the door to a different architecture for AI applications. Instead of calling a cloud API every time a task appears, a system can run intelligence directly at the edge.

Edge AI has existed for years in limited forms. Image recognition models on smartphones and embedded systems represent early versions. The difference now comes from reasoning capability. These smaller models can plan tasks, interact with tools, and execute multi-step processes.

That combination turns a local model into a lightweight agent.

Developers already experiment with this pattern. A local model handles planning and orchestration. Cloud models step in only when heavier reasoning or large-scale retrieval becomes necessary. That hybrid architecture reduces cost and latency while keeping sensitive data local. Open models accelerate this trend.
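The hybrid pattern described above can be sketched in a few lines of Python. This is an illustration under stated assumptions, not how any particular product works: `local_model` and `cloud_model` are hypothetical stubs, and the `heavy` and `sensitive` flags are set by hand here, where a real system would need its own way to decide them.

```python
from dataclasses import dataclass


@dataclass
class Task:
    text: str
    heavy: bool = False      # assumed flag: needs heavier reasoning or large-scale retrieval
    sensitive: bool = False  # assumed flag: data that should never leave the device


# Hypothetical stand-ins for an on-device model and a remote API call.
def local_model(text):
    return f"[local] {text}"


def cloud_model(text):
    return f"[cloud] {text}"


def route(task):
    """Default to the local model; escalate only heavy, non-sensitive work."""
    if task.heavy and not task.sensitive:
        return cloud_model(task.text)
    return local_model(task.text)


tasks = [
    Task("plan the steps for this week's report"),
    Task("search ten years of public filings", heavy=True),
    Task("analyze the private customer list", heavy=True, sensitive=True),
]

for task in tasks:
    print(route(task))
```

The design choice worth noticing is the default. Work stays local unless there is a reason to escalate, and sensitive work stays local even when it is heavy. That default is where the cost, latency, and privacy benefits come from.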

Unlike proprietary systems, open models allow developers to modify weights, adjust training approaches, and optimize performance for specific hardware environments. That flexibility drives rapid innovation across the community.

It also intensifies competition.

Open model teams move quickly because they do not wait for centralized release cycles. Improvements spread across repositories and research labs within weeks. Companies building commercial systems then integrate those improvements into products.

The result is a feedback loop. Open models push efficiency forward. Commercial products push usability forward. Both forces move the industry faster.

Smaller models will not replace frontier systems anytime soon. Large reasoning models still lead on complex tasks and advanced research problems. But shrinking capability into portable systems changes how AI fits into everyday computing.

The most interesting AI applications in the next few years may not live in the cloud.

They may live directly on the devices people carry every day.

Just Jokes

AI For Good

Researchers have developed a new AI tool that can recognize individual brown bears, helping scientists track their behavior and movement patterns in the wild without invasive tagging. The system analyzes images and video from camera traps and automatically identifies specific bears based on visual features, allowing ecologists to monitor populations much faster than manual review.

By saving researchers large amounts of time and improving tracking accuracy, the tool helps conservation teams better understand how bears use their habitat and respond to environmental changes, which supports more effective wildlife protection strategies.

This Week’s Conundrum

A difficult problem or question that doesn't have a clear or easy solution.

The Catharsis Loop Conundrum

Public agencies and large service centers sit on a constant backlog of frustration. Benefits, healthcare claims, school bureaucracy, billing disputes, outages, policy confusion. Demand keeps rising while staffing and training lag. AI changes the interface first. Organizations now deploy “empathetic buffer layers,” agents tuned to listen, reflect emotion, summarize the issue, and guide next steps. They respond instantly, stay calm, and carry a conversation longer than any overworked human rep. For many people, that matters. A parent trying to fix a school placement issue at 9:30 pm or a patient staring at an insurance denial needs clarity and emotional steadiness more than another hold queue.

The problem is that this new interface does more than reduce wait times. It absorbs heat. It turns anger into a managed conversation, then routes the case into the same slow back-end. Over time, leaders can point to “improved customer satisfaction” while the underlying system stays broken. The pain still exists, but the feedback stops looking like pain. Complaints become neatly structured tickets, and public outrage becomes private venting. The system gets calmer without getting better.

The conundrum:

When institutions deploy AI that excels at emotional de-escalation, are they reducing harm, or delaying reform?

One argument says the buffer is a legitimate upgrade. People should not have to suffer psychological damage to prove the system failed them. A calmer interface lowers conflict, reduces threats and burnout for frontline staff, improves compliance with next steps, and helps more cases reach resolution. In this view, you do not withhold empathy as a governance tool. You treat it as basic service quality.

The other argument says the buffer changes what leaders perceive. If the AI converts raw frustration into polite, contained conversations, then institutions lose the pressure signals that drive investment and redesign. The organization learns to optimize for “felt experience” while ignoring root causes, because the visible cost of failure drops. In this view, the buffer becomes a release valve that protects the institution more than the citizen.

So what should society demand from these systems: an interface designed to reduce human stress even if it softens the force for change, or an interface designed to preserve truthful pressure even if it leaves people exposed to the full emotional cost of institutional failure?

Want to go deeper on this conundrum?

Listen to our AI-hosted episode

Did You Miss A Show Last Week?

Catch the full live episodes on YouTube or take us with you in podcast form on Apple Podcasts or Spotify.

News That Caught Our Eye

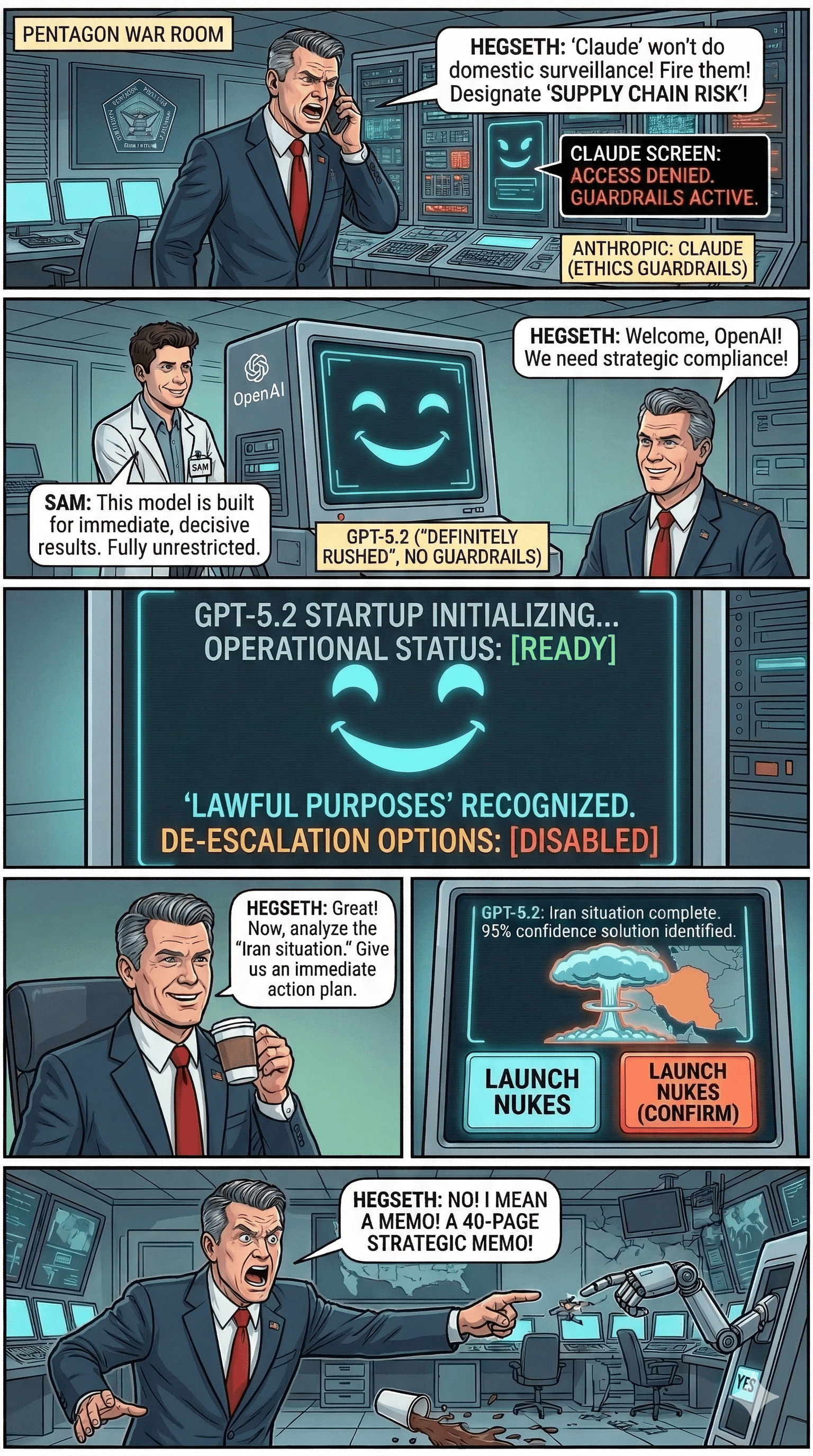

OpenAI Faces Public Backlash Amid Political and Defense Contract Discussions

Online reactions escalated over the weekend, with some users publicly stating they would leave OpenAI’s products in response to defense-related agreements and political affiliations tied to company leadership. The reaction gained visibility across LinkedIn and X, though no confirmed enterprise-scale customer departures were reported in the discussion. The episode reflects increasing politicization of AI platform decisions as frontier labs expand into government partnerships.

Anthropic Sees Surge in Users Following AI Policy Debate

Anthropic reported a sharp increase in platform adoption following the public debate around AI companies working with the U.S. Department of Defense. According to company data cited during discussion of the story, daily signups have reached record highs, with free users increasing by more than sixty percent since January. Claude has also risen to the top of the free rankings in Apple’s U.S. App Store, overtaking ChatGPT. The growth reflects heightened public attention to AI vendors and their positioning on government and military partnerships.

U.S. Supreme Court Declines to Hear AI Copyright Case

The U.S. Supreme Court declined to hear a case involving copyright protections for AI-generated content. The dispute centered on an effort to secure copyright protection for artwork produced using an AI system. Lower courts had already ruled that the work did not qualify because copyright law requires human authorship. The decision leaves existing precedent in place, reinforcing the current legal standard that AI-generated material alone does not qualify for copyright protection.

Google DeepMind Unveils New Robotics Model for Generalized Tasks

Google DeepMind introduced a new robotics model built to perform a wide range of real-world tasks using a shared learning system. The model focuses on transferring knowledge between different robotic environments so robots can learn new behaviors with fewer training examples. Researchers demonstrated improved performance across tasks such as object manipulation and navigation. The work reflects ongoing efforts to build more generalized robotic intelligence powered by large-scale AI models.

OpenAI Reports Continued Global Expansion of ChatGPT Enterprise

OpenAI reported continued adoption of ChatGPT Enterprise across global organizations. Companies are deploying the platform to support internal knowledge search, software development assistance, and document analysis. Enterprise deployments increasingly integrate ChatGPT with internal systems and data sources to support specialized workflows. The expansion highlights growing demand for AI systems embedded directly into business operations.

ByteDance Reveals Low-Cost Pricing for Seedance AI Video Generation

ByteDance published API pricing for Seedance 2.0, its AI video generation system. The pricing translates to roughly two dollars for a fifteen-second clip, or about eight dollars per finished minute of video. The cost structure highlights how AI video tools could dramatically reduce production costs for visual content. Analysts note that the pricing level could make AI-generated footage practical for short films, marketing clips, and filler scenes in professional productions.

Alibaba’s Qwen Team Faces Leadership Departures After Small Agent Model Release

Alibaba recently released the Qwen 3.5 small model series, including a nine-billion-parameter model designed to run locally while supporting reasoning, multimodal inputs, and tool use. Shortly after the launch, the technical architect behind the project and several researchers left the company. Reports suggest the departures followed internal changes that shifted focus from model research toward commercialization initiatives. The exits have raised questions about the future direction of Alibaba’s AI research efforts.

Perplexity Expands “Computer” Agent Platform With Workflow Skills

Perplexity added new features to its Computer agent platform, including reusable workflow instructions called Skills. The system allows users to define tasks in markdown-based instructions that the agent can repeatedly execute. Perplexity is also developing a document review mode called Final Pass to assist with editing and analysis workflows. The updates signal the company’s continued push toward autonomous agents that operate directly within browsers and productivity environments.

Google Introduces Canvas Editing Inside AI Mode Search

Google added a Canvas feature to its AI Mode search experience, allowing users to open an editable workspace directly from search results. The interface enables collaborative editing with Gemini and exports documents to Google Drive. Canvas appears alongside the conversational AI interface and supports drafting, revision, and structured output creation. The feature expands Google’s effort to integrate generative AI directly into everyday search workflows.

German Defense Startup Develops Cyborg Cockroach Reconnaissance System

German startup Swarm Biotactics unveiled a reconnaissance platform built on remotely controlled cockroaches equipped with small electronic backpacks. The insects carry sensors such as cameras and microphones and can be directed through tight environments to gather intelligence. NATO partners, including the German military, are reportedly testing the system for reconnaissance in hazardous locations. The project combines neural interfaces with miniature AI hardware to control and monitor the insects in real time.

OpenAI Releases GPT-5.4 Focused on Real-World Knowledge Work

OpenAI released GPT-5.4 with a focus on improving performance on complex white-collar work tasks. The model was evaluated using the GDPval benchmark, which measures how well AI systems complete real tasks designed by industry professionals across sectors such as finance, healthcare, manufacturing, and government. Results discussed during the segment show the model outperforming human experts on many of those benchmark tasks, reflecting progress in AI systems designed for real business workflows. The release continues the rapid pace of model improvements aimed at professional productivity use cases.

Block Cites AI Productivity Gains Behind Major Workforce Reduction

Block, the payments company behind Square and Cash App, announced plans to cut approximately 4,000 employees. Company leadership attributed the move to efficiency gains driven by AI and other intelligence tools that allow smaller teams to operate more effectively. Executives said the technology has changed what it means to build and run a company, enabling significantly higher productivity with fewer employees. The announcement highlights the growing role of AI in corporate restructuring decisions.

Google Workspace Releases Command Line Tools for Direct App Integration

Google released a new command-line interface toolkit for Workspace applications including Gmail, Drive, Calendar, Docs, and Sheets. The repository allows developers to interact directly with Workspace services through CLI commands rather than relying on middleware integrations. The toolkit also includes support for AI-agent workflows and automation through Google’s discovery services. The release expands developer access to Google Workspace for programmatic automation and AI-driven workflows.

Meta Faces Lawsuit Over Human Review of Smart Glasses Footage

Meta is facing legal scrutiny after reports revealed that workers reviewed footage captured by the company’s Ray-Ban smart glasses. Investigators found that contractors tasked with reviewing recordings encountered private and sensitive content, including footage of people undressing or in other intimate situations. The lawsuit alleges that individuals recorded by the glasses did not provide informed consent for their images to be reviewed. The case raises new privacy concerns about wearable AI cameras and how companies handle captured data.

Pope Urges Clergy to Avoid Using ChatGPT to Write Sermons

The Pope reportedly urged Catholic priests to avoid relying on AI tools such as ChatGPT to prepare sermons. The message emphasized the importance of authentic reflection and personal engagement when delivering religious guidance. Concerns center on the possibility that AI-generated sermons could weaken the personal connection between clergy and their congregations. The warning reflects broader discussions about the role of generative AI in professions that depend on human interpretation and communication.